Works cited

The Illustrated Transformer

Alammar, J. (2018). The Illustrated Transformer [Blog post]. Retrieved from https://jalammar.github.io/illustrated-transformer/


This is a CS blog post by Jay Alammar that explains how a transformer machine learning (ML) model works from a visual and conceptual perspective, without diving into the heavy mathematics behind it. Transformers are a key building block of the Stable Diffusion model we are using to generate images in our app: its text encoder is a transformer. We used this article to better understand how words and sentences can be transformed into numerical data. In our case, the semantic content of the text prompt is transformed into a data matrix that is then used as input to the image-generation stages of the model. This transformer workflow leads directly into the technical content of our second resource, "The Illustrated Stable Diffusion".
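
The core operation that Alammar's post illustrates is self-attention. In self-attention, each token's new representation is a weighted mix of every token's embedding. Below is a minimal pure-Python sketch of scaled dot-product attention, softmax(Q·K^T / sqrt(d))·V. To stay short, it assumes Q = K = V = the raw embeddings. It therefore skips the learned W^Q, W^K and W^V projections, the multiple heads, and the positional encodings that the post also describes. The three 2-dimensional embeddings are made up for illustration.

```python
import math


def transpose(m):
    """Swap rows and columns of a list-of-lists matrix."""
    return [list(col) for col in zip(*m)]


def matmul(a, b):
    """Multiply an (n x k) matrix by a (k x m) matrix."""
    return [
        [sum(a[i][p] * b[p][j] for p in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def softmax(row):
    """Numerically stable softmax over one row of scores."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x):
    """Scaled dot-product self-attention with Q = K = V = x (no learned weights)."""
    d = len(x[0])
    scores = matmul(x, transpose(x))  # (tokens x tokens) similarity scores
    weights = [softmax([s / math.sqrt(d) for s in row]) for row in scores]
    return matmul(weights, x), weights  # (tokens x d) output, (tokens x tokens) weights


# Hypothetical 2-dimensional embeddings for the tokens "paradise", "cosmic", "beach".
embeddings = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out, weights = self_attention(embeddings)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` sums to 1 and shows how strongly one token attends to the others. The output has one row per token, which is the same kind of matrix the text encoder passes on.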


The Illustrated Stable Diffusion

Alammar, J. (2022). The Illustrated Stable Diffusion [Blog post]. Retrieved from https://jalammar.github.io/illustrated-stable-diffusion/


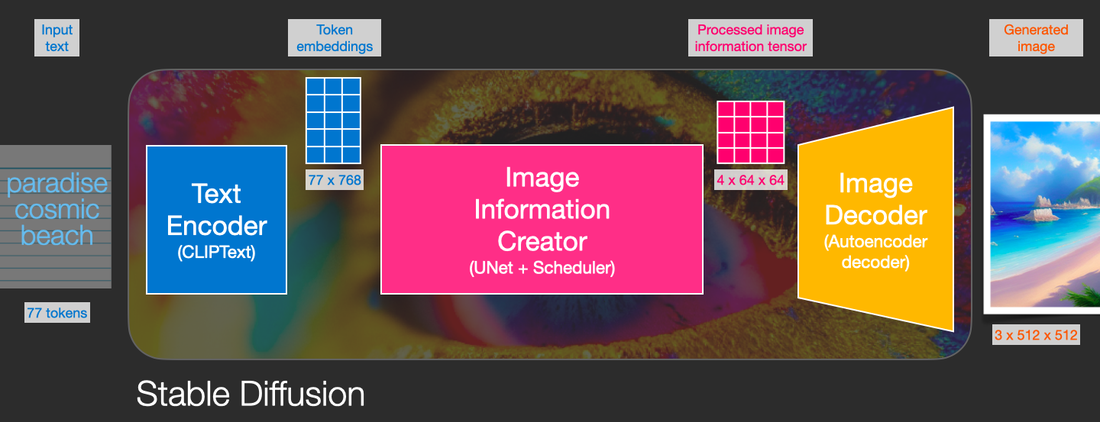

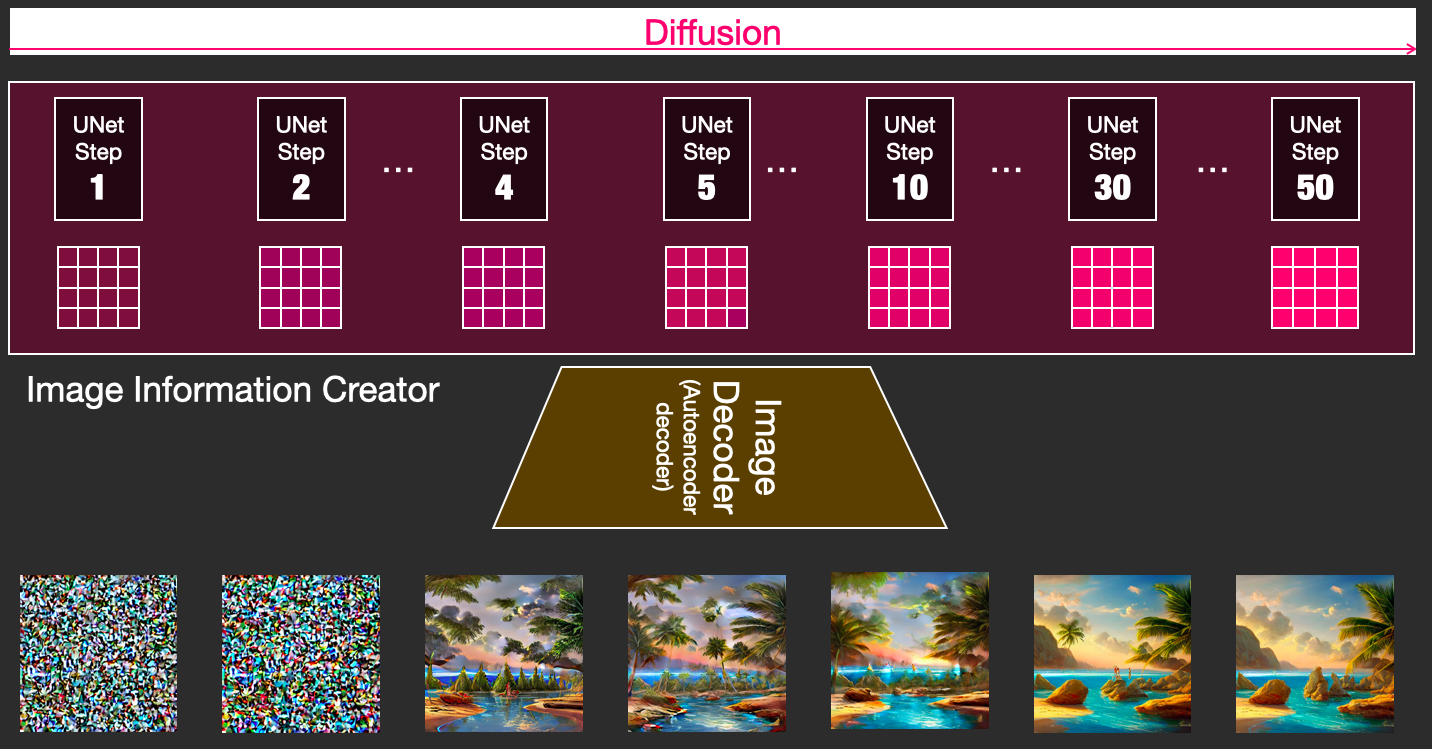

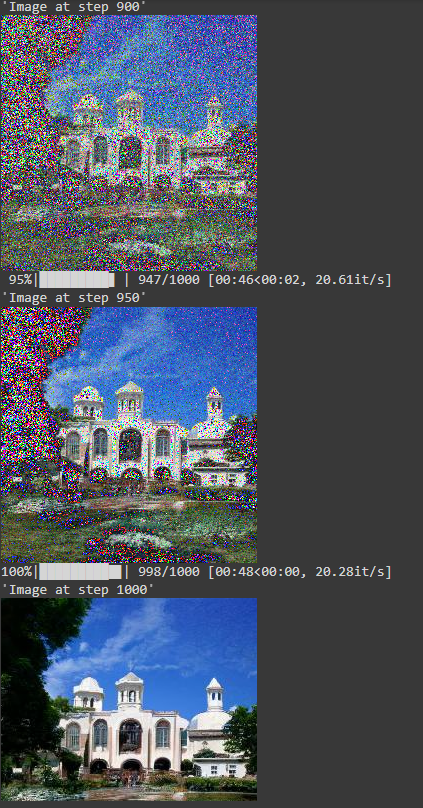

This is a CS blog post by Jay Alammar that visually explains one of the newest (2022) developments in machine learning research: Stable Diffusion. We won't be training our own model, as that would take time and ML expertise that we don't have. Instead, we'll run the model in our iOS app through Apple's Core ML framework. Apple's tools convert a Python (PyTorch) model hosted on Hugging Face into a Core ML model that can be called from Swift and is optimized for Apple hardware.

The model builds on the transformer described above. A text encoder creates token embeddings, transforming the input text ("paradise cosmic beach") into an information matrix. The image information creator then uses this matrix to guide the step-by-step denoising of a latent tensor. Finally, the image decoder turns the last tensor into an image. The bottom image visualizes this process: decoding the latent tensor after each round of diffusion shows the image losing noise step by step.

We hope to show this step-by-step visualization of the diffusion process in a view in our app, to help our users better understand how Stable Diffusion works. If we have time, we may also fine-tune the model to specialize its image generation for album cover art.
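
The sketch below makes "information matrix" and "tensor" concrete by tracing the shape of the data through the three components. The sizes are the Stable Diffusion v1 values shown in Alammar's post, as we understand them: 77 token embeddings of 768 numbers each, a 4×64×64 latent tensor, and a 512×512 RGB image. The `steps=50` default is an assumption borrowed from the diffusers library's default. The helper functions are hypothetical. They only compute shapes and do not run any model.

```python
# Assumed Stable Diffusion v1 sizes, as illustrated in Alammar's post.
NUM_TOKENS = 77       # text encoder: prompt padded/truncated to 77 tokens
EMBED_DIM = 768       # numbers per token embedding
LATENT_CHANNELS = 4   # channels in the latent "image information" tensor
VAE_FACTOR = 8        # the image decoder scales each latent side up 8x


def text_embedding_shape():
    """Shape of the matrix the text encoder hands to the image information creator."""
    return (NUM_TOKENS, EMBED_DIM)


def latent_shape(height=512, width=512):
    """Shape of the tensor that is denoised step by step."""
    if height % VAE_FACTOR or width % VAE_FACTOR:
        raise ValueError("height and width must be multiples of 8")
    return (LATENT_CHANNELS, height // VAE_FACTOR, width // VAE_FACTOR)


def decoded_image_shape(latent):
    """Shape of the RGB image the image decoder produces from a latent tensor."""
    _, h, w = latent
    return (3, h * VAE_FACTOR, w * VAE_FACTOR)


def trace_pipeline(height=512, width=512, steps=50):
    """Print the data shape at each stage. The latent shape stays the same
    during every denoising step; only its contents change."""
    emb = text_embedding_shape()
    lat = latent_shape(height, width)
    img = decoded_image_shape(lat)
    print(f"text encoder output: {emb}")
    print(f"latent during each of {steps} steps: {lat}")
    print(f"image decoder output: {img}")
    return emb, lat, img


emb, lat, img = trace_pipeline()
pixels = img[0] * img[1] * img[2]
latents = lat[0] * lat[1] * lat[2]
print(f"the latent holds {pixels // latents}x fewer numbers than the image")
```

The 48× size reduction is why the denoising loop runs on the small latent tensor. Only the final result needs to pass through the image decoder.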


Hugging Face diffusers and Stable Diffusion Colabs

Hugging Face, Google Colaboratory (2022). Retrieved from https://github.com/huggingface/diffusers and https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/diffusers_intro.ipynb#scrollTo=PzW5ublpBuUt

https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_diffusion.ipynb#scrollTo=gd-vX3cavOCt


This Google Colaboratory notebook comes from Hugging Face, an open-source platform that hosts machine learning models. It gives an interactive introduction to diffusion models. We used it to better understand how the models we read about in the previous blog posts actually work. The image on the left shows one of its examples, in which an image of random noise is gradually denoised into a coherent picture. The diffusion process shows little visual progress until about step 800, when the structure of a white building begins to form in the background of the noisy image. The image on the left shows steps 900, 950 and 1000 of the diffusion process, where the visual improvement is most apparent.
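
The slow start of the reverse process follows from how the training noise is scheduled. In the forward process, a clean image x0 is mixed with Gaussian noise as x_t = sqrt(alpha_bar_t)·x0 + sqrt(1 − alpha_bar_t)·noise. Here alpha_bar_t is the running product of (1 − beta_s). The sketch below computes alpha_bar_t under the assumption of a linear schedule, with beta running from 0.0001 to 0.02 over 1000 steps. These are the defaults of diffusers' `DDPMScheduler`, but we have not checked that the notebook's model uses exactly these values. The three-value "image" is a toy stand-in.

```python
import math
import random


def linear_betas(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances, evenly spaced from beta_start to beta_end."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + step * t for t in range(num_steps)]


def cumulative_alphas(betas):
    """alpha_bar_t = product of (1 - beta_s) for s <= t: how much original signal survives."""
    out, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        out.append(prod)
    return out


def add_noise(x0, alpha_bar, rng):
    """Forward process: x_t = sqrt(alpha_bar) * x0 + sqrt(1 - alpha_bar) * gaussian noise."""
    signal, noise = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [signal * x + noise * rng.gauss(0.0, 1.0) for x in x0]


alpha_bar = cumulative_alphas(linear_betas())
for t in (0, 200, 500, 800, 999):
    print(f"t={t:4d}  signal weight={math.sqrt(alpha_bar[t]):.3f}")

rng = random.Random(0)
noisy = add_noise([0.5, -0.2, 0.9], alpha_bar[999], rng)  # almost pure noise
```

The printed signal weight is close to 1 at t = 0 and close to 0 at t = 999. The reverse process runs from t = 999 down to t = 0, so its early steps start from almost pure noise. This is consistent with the building appearing only late in the notebook's run.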

In our app, this denoising will be guided by text inputs such as the song title and album title. To experiment with how this would work, we used another of Hugging Face's diffusion Colab notebooks, this time for Stable Diffusion. We used the prompt "a photograph of an astronaut riding a horse", a classic prompt for image generation models. The results are shown in the second image on the left. Together, these notebooks gave us a better understanding of the ML models that we will implement in our app with Core ML.
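
The app's prompt will come from track metadata, so here is a minimal sketch of how those fields might be combined into a prompt string. The function `build_prompt`, its field list and the "album cover art" style suffix are hypothetical choices of ours. None of them come from the notebooks.

```python
def build_prompt(song_title="", album_title="", artist="", style="album cover art"):
    """Join the non-empty metadata fields and a style suffix into one prompt string.

    Hypothetical helper: the fields used and the style suffix are our own guesses.
    """
    fields = [f.strip() for f in (song_title, album_title, artist) if f and f.strip()]
    if not fields:
        raise ValueError("provide at least one of song_title, album_title, artist")
    return ", ".join(fields + [style])


# Hypothetical track metadata.
print(build_prompt(song_title="Paradise", album_title="Cosmic Beach"))
```

The resulting string would replace "a photograph of an astronaut riding a horse" in the Stable Diffusion notebook, which passes the prompt to a diffusers `StableDiffusionPipeline`.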

Apple Core ML Package

Orhon, A., Siracusa, M., & Wadhwa, A. (2022, December). Stable Diffusion with Core ML on Apple Silicon. Apple Machine Learning Research.

https://machinelearning.apple.com/research/stable-diffusion-coreml-apple-silicon

https://github.com/apple/ml-stable-diffusion


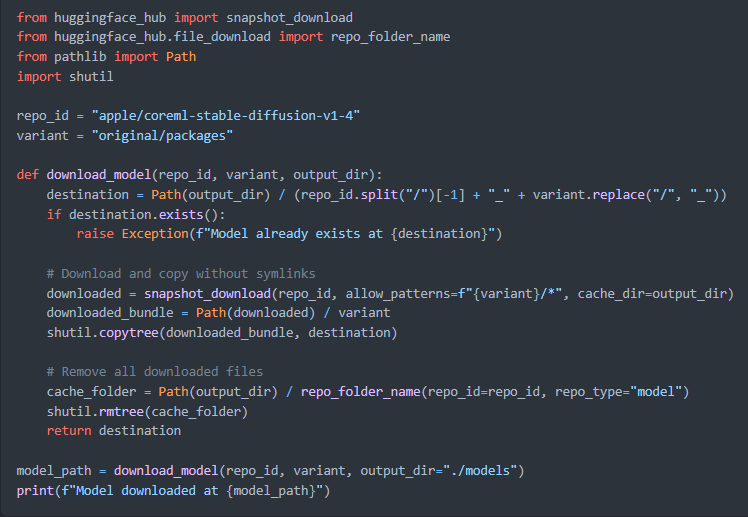

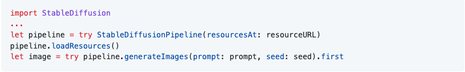

This GitHub repository from Apple lets developers generate images on their own devices. It has two parts. A Python package, python_coreml_stable_diffusion, uses Hugging Face diffusers and coremltools to convert the PyTorch Stable Diffusion models into Core ML models. A Swift package, StableDiffusion, then deploys these Core ML models so that developers can add the image generation pipeline to their apps in Xcode.
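
For reference, the conversion step is a command-line script in the repository's Python package. The sketch below only assembles, and optionally runs, that command from Python. The flag names (`--convert-unet`, `--convert-text-encoder`, `--convert-vae-decoder`, `--convert-safety-checker`, `--model-version`, `-o`) are as we recall them from the README, so check them against `--help` for the version you install. The `torch2coreml_command` and `convert` helpers and the output folder name are our own.

```python
import subprocess
import sys


def torch2coreml_command(output_dir, model_version="CompVis/stable-diffusion-v1-4"):
    """Build the argument list for Apple's PyTorch-to-Core ML conversion script.

    Hypothetical helper; flag names follow our reading of the repository README.
    """
    return [
        sys.executable, "-m", "python_coreml_stable_diffusion.torch2coreml",
        "--convert-unet",            # image information creator (denoising UNet)
        "--convert-text-encoder",    # transformer text encoder
        "--convert-vae-decoder",     # image decoder
        "--convert-safety-checker",
        "--model-version", model_version,  # Hugging Face Hub model to convert
        "-o", output_dir,            # folder that receives the Core ML model files
    ]


def convert(output_dir):
    """Run the conversion. Needs the repo's Python package installed; slow and large."""
    subprocess.run(torch2coreml_command(output_dir), check=True)


if __name__ == "__main__":
    print(" ".join(torch2coreml_command("./mlpackages")))
```

Calling `convert` requires the repository's package to be installed, and it downloads the full PyTorch model. It is therefore a one-time step on a Mac, not something the app itself runs.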

Two sections of the repository's README are especially important for us: using ready-made Core ML models from the Hugging Face Hub, and image generation in Swift. The first explains how to download, from the terminal, the Core ML files needed to run Stable Diffusion in an app. The second walks through a basic Swift implementation that generates an image from a text prompt. The GitHub page also points to Hugging Face's example app. This sample app contains a full Stable Diffusion implementation that shows developers how to integrate the models into an iOS app.
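
The README's download step can also be done from Python with the `huggingface_hub` library's `snapshot_download`. Below is a minimal sketch. It assumes the `apple/coreml-stable-diffusion-v1-4` Hub repository and its `original/packages` variant folder. The README also lists other variants, including compiled ones, which we understand are what the Swift package loads, so pick the folder that matches your use. The helper names are hypothetical.

```python
from pathlib import Path


def local_model_dir(repo_id, variant, root="./models"):
    """Hypothetical helper: a local folder name such as
    models/coreml-stable-diffusion-v1-4_original_packages."""
    return Path(root) / (repo_id.split("/")[-1] + "_" + variant.replace("/", "_"))


def download_coreml_model(repo_id="apple/coreml-stable-diffusion-v1-4",
                          variant="original/packages"):
    """Download only the chosen variant's files from the Hugging Face Hub."""
    from huggingface_hub import snapshot_download  # pip install huggingface_hub
    model_path = local_model_dir(repo_id, variant)
    snapshot_download(repo_id, allow_patterns=f"{variant}/*", local_dir=model_path)
    return model_path
```

The import sits inside `download_coreml_model`, so the path helper works without `huggingface_hub` installed. Only the actual download needs the library and a network connection.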

Peter Schorn's SpotifyAPI

Schorn, P. (2021). SpotifyAPI [Computer software]. GitHub.

https://github.com/Peter-Schorn/SpotifyAPI

https://peter-schorn.github.io/SpotifyAPI/documentation/spotifywebapi/spotifyapi


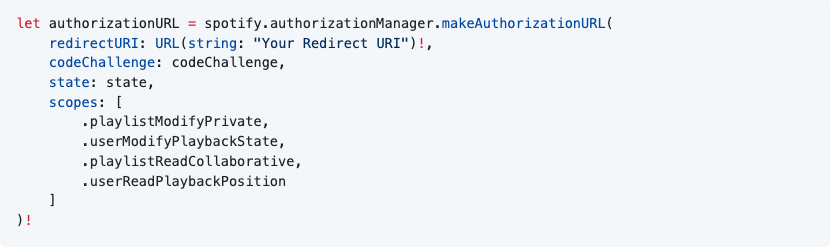

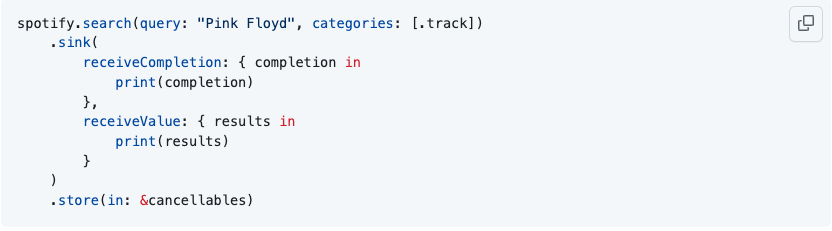

This GitHub package allowed us to fully integrate Spotify functionality into the app. Schorn's Swift library wraps Spotify's Web API so that developers can access user information and use it however they like in their apps. Our app uses its authorization, search, and saved album and playlist features.

The authorization feature lets the user sign in through Spotify's own login page. It then saves the resulting authorization in the app's data, so the user does not need to log in again the next time they open the app. Search lets the user look up any album or playlist, so they are not restricted to their own saved albums and playlists, which the API also gives us access to. The album object gives us the tracks, artist(s) and album cover, so we can display all of these to the user. The playlist object provides similar information.

The repository's documentation lets developers search for any functionality they may want to implement. It explains what each function does and how to use it in an app.
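
Under the hood, the library talks to Spotify's Web API. The Python sketch below illustrates this; it is not Schorn's Swift API, whose type and method names differ. It builds the two URLs behind the features we use: the authorization page of Spotify's Authorization Code flow, and a search request for albums and playlists. The client ID, redirect URI and state value are placeholders. `user-library-read` and `playlist-read-private` are the Spotify scopes for reading saved albums and private playlists.

```python
from urllib.parse import urlencode

AUTHORIZE_URL = "https://accounts.spotify.com/authorize"
SEARCH_URL = "https://api.spotify.com/v1/search"


def authorization_url(client_id, redirect_uri, scopes, state):
    """Authorization Code flow, step 1: the Spotify page where the user logs in."""
    query = urlencode({
        "client_id": client_id,
        "response_type": "code",     # Spotify redirects back with ?code=...
        "redirect_uri": redirect_uri,
        "scope": " ".join(scopes),   # scopes are space-separated
        "state": state,              # echoed back; lets the app reject forged redirects
    })
    return f"{AUTHORIZE_URL}?{query}"


def search_url(query, types=("album", "playlist"), limit=20):
    """Search endpoint; the request must carry an 'Authorization: Bearer <token>' header."""
    params = {"q": query, "type": ",".join(types), "limit": limit}
    return f"{SEARCH_URL}?{urlencode(params)}"


# Placeholder client ID, redirect URI and state, for illustration only.
print(authorization_url("YOUR_CLIENT_ID", "myapp://callback",
                        ["user-library-read", "playlist-read-private"], "xyz123"))
print(search_url("cosmic beach"))
```

After the user approves, Spotify redirects to the redirect URI with a `code`. The app exchanges that code for access and refresh tokens at `https://accounts.spotify.com/api/token`. As far as we understand, storing the refresh token is what lets the user skip logging in the next time.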
