
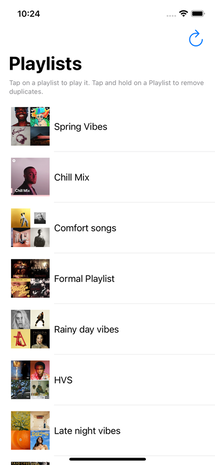

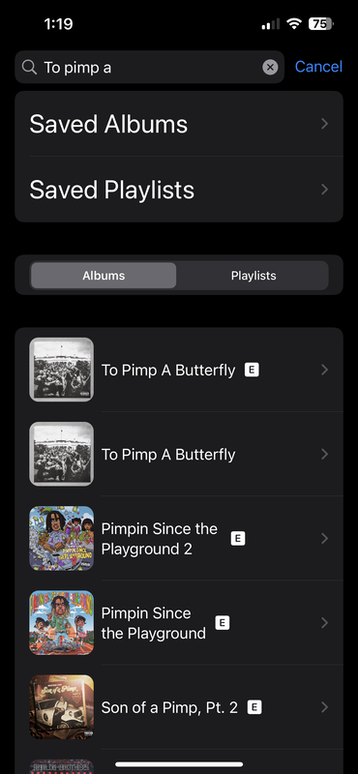

Progress Update:

Since the last PSU, we’ve continued finishing up the user interface and app design. Since the start of the project, we had meant to implement a search bar with access to Spotify’s entire library, in case you wanted to look up an album or playlist on a whim instead of being restricted to your saved library. We’ve now implemented this feature: after signing in through Spotify, people can search the entire Spotify library on the LibraryView screen. We’ve also added a symbol beside each album, playlist, and song that marks it as explicit if it contains curse words. That way, it’s easier to differentiate between two albums that look the same but are really two versions of the same album: the original version and the clean version. We’ve also been working on AlbumView and have decided to merge it with GenerateView, as it’s more intuitive to keep all of the options and songs for generation on one view so a user can keep track of everything about their generation at once.

Apart from programming, we had another meeting with Anushka, our mentor, and gave a rundown of our progress and our roadmap for the next two weeks. We have also created a rough draft of our presentation, which we shared with Anushka and got feedback on.

Goals Until Project Presentation Day (5/26):

We still have a lot of work to do before presentation day. One goal that we weren’t sure we could accomplish, but have recently begun to pursue urgently, is getting our app on the App Store before presentation day. We want to have it up before next Friday so that we can show a QR code at the end of our presentation and let everyone download the app and try it for themselves as we demo it. Luckily, we already have access to an App Store Connect developer account through my family’s NanoFlick LLC corporate account, which we’ve obtained permission to use to upload our app. Once we’ve completed a rough first build, we’re going to upload it to App Store Connect and await approval from Apple’s review team (which usually takes under 48 hours). Our goal is to upload this first build by this Thursday, which we think we can accomplish.

We’ve planned out our roadmap for the next couple of days to accomplish this goal: Rehan will finish up LibraryView and GenerateView, and Danny will work on AlbumView and a light version of CompletedView without its full functionality, as well as create the App Store page for our app. Additionally, we’re going to do a graphic design workshop together where we come up with a name, logo, description, and a little bit of branding to market our app on the App Store. Once all of this work is completed, we hope to implement the finishing touches on our app, including the diffusion steps feature and the iOS share sheet to allow people to share their art creations. Finally, we need to update our presentation to respond to peer feedback on our rough draft and to include updates from the past two weeks.

Changes to Project:

The only major change is that we’re pursuing an App Store upload, which seems like a viable and desirable accomplishment that will help fulfill the purpose of our project by allowing many more people to access and use the app.

Timeline Updates / Upcoming Issues:

No major timeline updates. We’re on track to be ready with full functionality by presentation day.


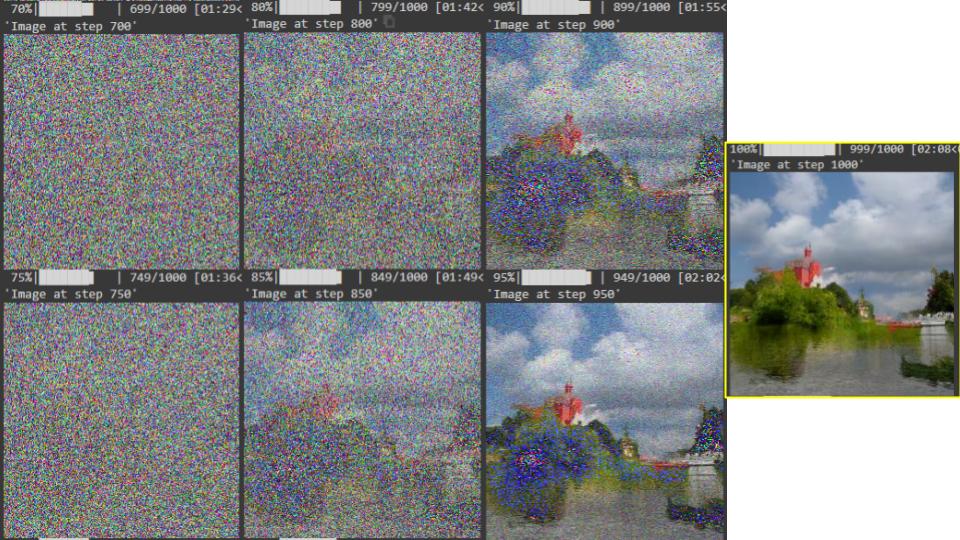

Progress Update:

Our biggest update has been finishing a good deal of our user interface. We have implemented the Library and Album Views from our Figma. We have started developing the Generate View and plan to use practice images for testing. The images below show our current progress.

After completing a large portion of our user interface, Rehan decided to start experimenting with Stable Diffusion and generating images. As of right now, this is purely for fun and to see how things work, because we still have steps in between that we want to complete. The initial takeaway from testing was that memory is an important aspect to think about. In addition, it is a pretty time-intensive process and only works on my computer, as it crashed on my phone. It will be fun to continue implementing these models at a later time.

On a different front, we met with our mentor Anushka for the first time at the beginning of April. It was good to share our interests as well as the current state of our project and how we want to progress. She had some good insights on how to progress, as well as different things we can think of implementing once our initial goals are completed.

Goals For May:

Our first goal for May is to complete our user interface. Once we have our entire app designed without full functionality, we will be ready to implement the Stable Diffusion models. We want to have a fully working prototype, which includes the Stable Diffusion model fully implemented and working on our phones. In addition, we want to complete our second interview with Jeff and continue meeting with Anushka to make sure we are on a good track and have someone to continually give us feedback on how our project looks to others. Currently we are on pace to have a working prototype in May. Below is a sample generation for the album Kills You Slowly.

Changes to Project:

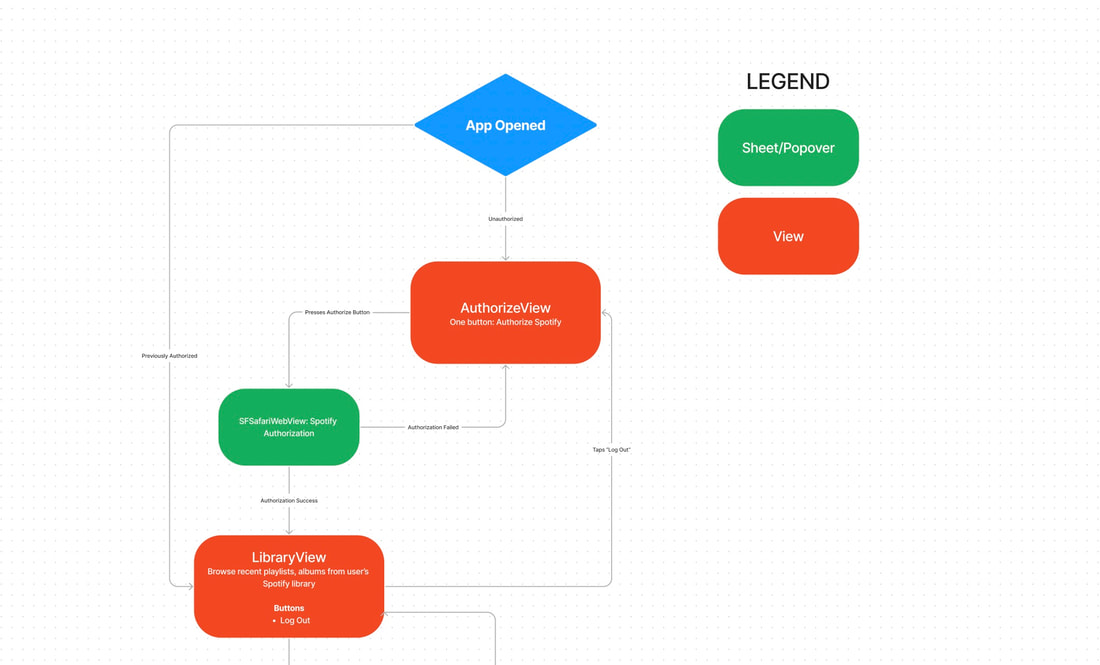

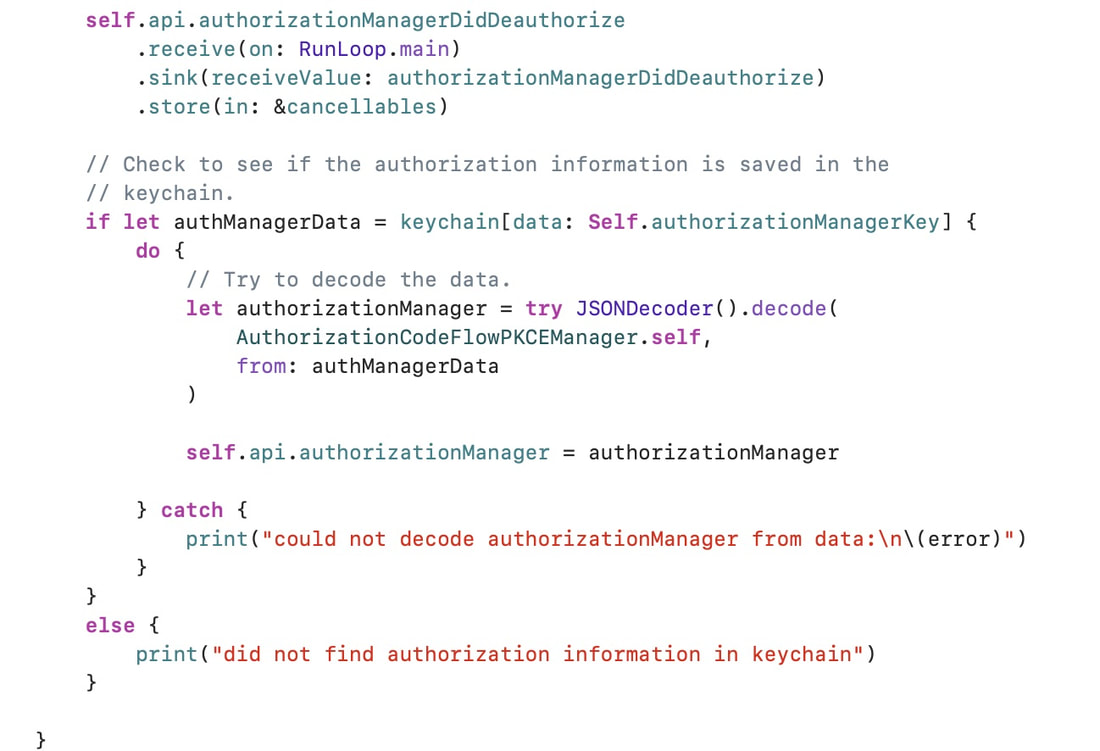

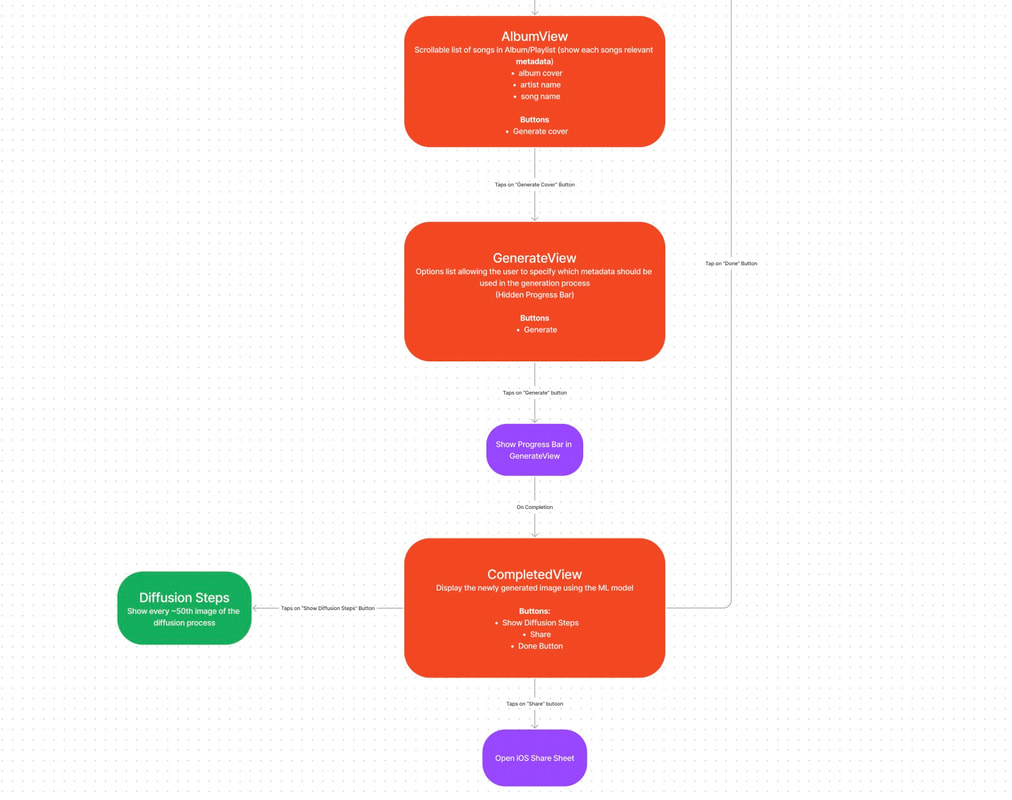

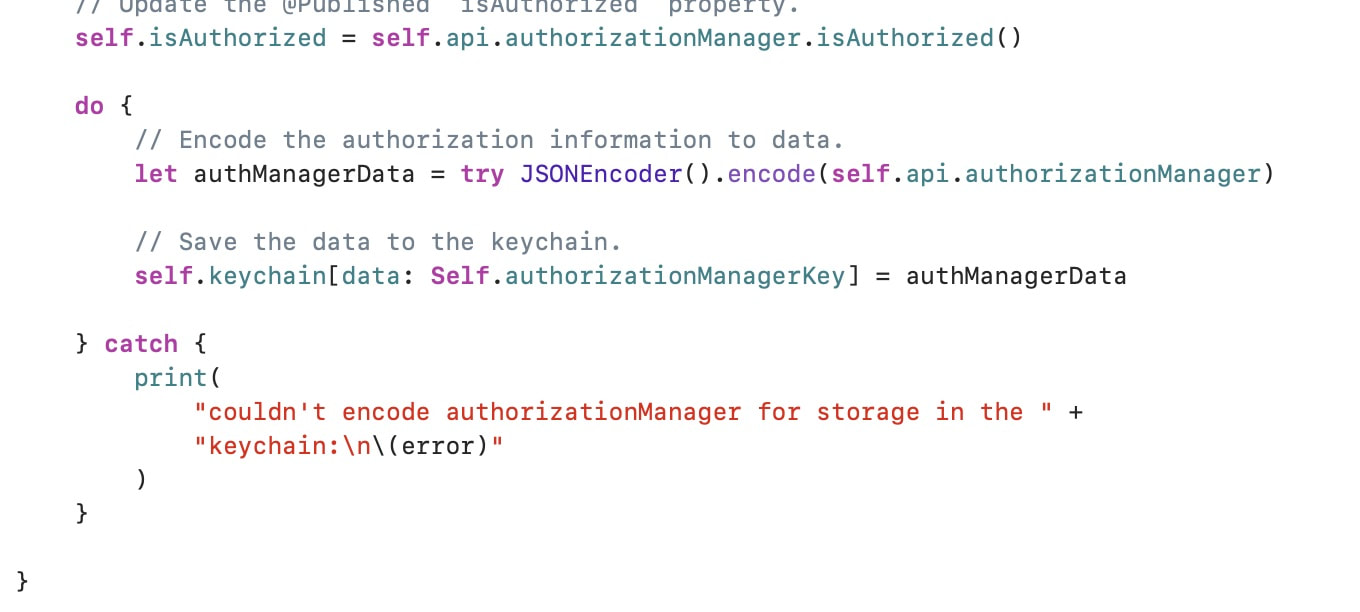

We have not really made any changes to our project. We have slightly updated our Figma in terms of what we want the design to look like. These small design tweaks have been fairly continuous, but the overall functionality and goals of the project remain the same.

Timeline Updates / Upcoming Issues:

With AP exams coming up and senioritis setting in, both of us have been catching up on a lot of work that we have been missing. Rehan has a little more time because his AP exams aren’t as difficult and he doesn’t have as much work to catch up on, so he will have a good amount of time to get some work done, though it will definitely be at a slower rate. Danny has gotten us going in terms of development and has spearheaded a good amount of work for the project, so we may take the whole time allotted to get the prototype working, but it should be ready when presentation time comes.

Progress Update:

The biggest thing we have done since our last PSU has been conducting our first interview with Michael Gunn, the Masters CS student at UIUC. He was very insightful in giving us information on how to navigate this project. We learned a lot about how to approach the different stages of our project: designing the interface, implementing the model, and fine-tuning the model to fit the specifications of our project as a whole. You can watch the interview on the Interviews tab of our Weebly website.

In terms of programming, the authorization capability is fully functional, and we save the user in the device’s local storage so that a user does not have to sign in to Spotify every time they open our app. The snippets of code and animations for this project can be seen below. Along with completing the authorization capability, we have played around with the different functionalities Spotify’s API gives us.
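For readers curious how "saving the user in local storage" can work, here is a minimal sketch. It is not the code from our repository: the `StoredSession` type, its fields, the `SessionStore` wrapper, and the key name are all hypothetical, and it assumes the authorization state can be represented as a `Codable` value written to `UserDefaults`. For real tokens, the Keychain is usually a safer home than `UserDefaults`.

```swift
import Foundation

// Hypothetical stand-in for whatever authorization state the app keeps;
// the field names are illustrative, not the project's actual model.
struct StoredSession: Codable, Equatable {
    var accessToken: String
    var refreshToken: String
    var expirationDate: Date
}

// Small wrapper that saves and restores the session under a single key.
struct SessionStore {
    let defaults: UserDefaults
    let key = "storedSpotifySession" // hypothetical key name

    // Encodes the session as JSON and writes it to local storage.
    func save(_ session: StoredSession) throws {
        let data = try JSONEncoder().encode(session)
        defaults.set(data, forKey: key)
    }

    // Returns nil when nothing is saved or the saved data cannot be decoded.
    func load() -> StoredSession? {
        guard let data = defaults.data(forKey: key) else { return nil }
        return try? JSONDecoder().decode(StoredSession.self, from: data)
    }

    // Removes the saved session, for example when the user signs out.
    func clear() {
        defaults.removeObject(forKey: key)
    }
}
```

On launch, an app following this pattern would call `load()` first and only show the Spotify sign-in screen when it returns `nil`.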
The biggest capability we have implemented is gathering the data for a user’s playlists and displaying it to them in the app. In addition, we finished the design of our flowchart, which gives a great template of what our entire app will look like. We are currently programming the user interface based on our flowchart in Figma. Overall, we have developed a good setup to design the app without functionality and then implement the machine learning model once the app has been designed.

Goals For April:

Our first goal for April is to build all the features we outlined in the flowchart into the actual app without full functionality. This just means we will have buttons and views that appear but do not do anything yet. From here, we want to start implementing the Stable Diffusion machine learning model using Apple’s CoreML API. We want to have a small working model of Stable Diffusion so that in May we can do all the fine-tuning and adjustments we need. To do this, we will also have to continue looking at resources on Stable Diffusion, as well as projects and sources that help us with Apple’s implementation of the model. From our interview with Michael, we learned that for a project like this we should keep a working model at each step so that we have a presentable outcome at the end of the school year. Finally, we also want to make sure that we complete our second interview in April.

Changes to Project:

As of right now, we do not have any changes to our project. We plan to continue with our current goals. We did consider changing from an iOS app to a desktop app after our talk with Michael, but we decided against it because Danny and I have more experience with iOS development. We are excited to continue this project, and hopefully we can finish it in time.

Timeline Updates / Upcoming Issues:

Coming off of basketball season has given me a lot more time to work, and Danny has done a lot of work on getting required tasks done. We have one more interview coming up, with Jeff Carmassi, to give us a more comprehensive idea of the history of album covers and their importance today. We hope to complete that interview soon, after which we will only have our last two PSUs and the code to complete. In terms of programming, we have made good progress, and now that we have a flowchart for the design, we will be able to get a lot more work done on the coding side.
Currently no issues have come up, and things are going smoothly.

Progress Update

One of the first things we’ve done since the last status update was create our Xcode project and sync our progress to the cloud with GitHub, a version control host. To view our code progress, you can view the latest version through this repository link, which we will be putting at the bottom of every PSU and on our front page. Additionally, we will be creating a README file so that people who find our repository on GitHub can learn about our project there. We decided to make the repository public instead of private for a few reasons: it allows a greater degree of transparency, as anyone on the web can view our exact Swift code and track our progress; it increases user freedom and customizability, as people can freely download our code and make adjustments and improvements as they please; and it allows for community fixes, as people can create their own pull requests with improvements or bug fixes for us to review and approve.

After creating the project, we started implementing the authorization process required to integrate Spotify’s wide and curated library of music into our app. Instead of using Spotify’s own iOS Software Development Kit, we decided to use Peter Schorn’s SpotifyWebAPI package, which gives us the expanded functionality of Spotify’s Web API, including the ability to upload custom images and read and write to user playlists. As of right now, we’ve programmed the Spotify authorization and deep link capability (see below) and are currently working on post-authorization data retrieval.

On the ML end of our program, we’ve been researching ways to host a Stable Diffusion model locally on Apple Silicon, such as those previously mentioned on HuggingFace.
Local hosting (as opposed to cloud hosting) will allow us to run the model directly on people’s mobile devices without the costs associated with a centralized computing server that runs the model. However, one challenge with this approach is that the ML model we hope to run requires huge amounts of storage, of an order of magnitude that outclasses the total storage of any current iPhone. Luckily, Apple has addressed this issue through its proprietary CoreML Software Development Kit (Stable Diffusion with Core ML on Apple Silicon). This will reduce the storage required and streamline the computing process of our model on people’s phones, as well as make it much easier for us to implement our model.

We've reached out to Jeff Carmassi, a Masters CS student at the University of Illinois at Urbana-Champaign with experience in AI and image generation research, for an interview. We've also found a mentor with experience in software engineering, Anushka Narvekar, and have filled out our mentor form here!

Goals For March

Over these next few weeks, we’ll be working on designing and programming our user interface. As stated in our project proposal, one of our priorities for this app is accessibility, as we want to put the creative tools of AI in the hands of everyone with minimal technical hassle. Our UI design will include a flowchart from SwiftUI View to View (think of Views as the different pages of an app), so look out for that diagram in our next PSU! By the end of the month, we’re shooting to have all of the UI structure programmed without fleshed-out view models. For example, all of the buttons on one view will be programmed, but we will work on their actual function in the future.

On the backend side of our app, we’re finishing up gathering user data from Spotify’s cloud database after authorization. We hope to finish that within the next week. If we finish all of these tasks before our next PSU, we’ll start diving into CoreML implementation and programming using Apple’s Stable Diffusion on CoreML package on GitHub. That is the most daunting and time-consuming task that stands ahead of us in the near future.

To fulfill our senior project requirements, we hope to schedule an interview with Korinn’s contact, who can help us learn more about the history of album covers as a form of artistic expression. We want to complete this interview before we begin fine-tuning our model so that we can use insights about the importance of different aspects of album cover art when training our model to best represent the artist’s vision from the information available. We also hope to meet with our mentor at least once in the next few weeks to hear her input on our sense of direction with the project and on our UI design and flowchart (once completed).

Changes to Project

We haven’t made any changes to our project or our scope since the last update. Developing for iOS in Xcode still seems to be the best path forward, with the most open-source support infrastructure around it. One thing we should clarify is that our final product will not be able to accurately include text in the generated art, as the existing Stable Diffusion models that we’re going to use aren’t optimized for rendering legible text from the text prompt.

Timeline Updates / Upcoming Issues

We should be able to move at a faster pace in these next few weeks, as Rehan has finished the long hours of the basketball season and has more free time to work on the project. Other than that, our timeline has remained the same.

Self-evaluation score: 25/25.

Calendar: www.notion.so/albumcovergenerator

Progress Update

In the past two weeks, we have started to deeply research the technical aspect of our project. With the help of Jack Youstra, we have received numerous sources to review that detail how the Transformer and Stable Diffusion models we are using work from a technical perspective. We have used these resources to get a good understanding of the theory and fundamentals behind Stability AI’s Stable Diffusion, the primary AI model we are using for our project. In addition, we have read and run through two different Google Colab files (Diffusers and Stable Diffusion) that explain the code behind the Stable Diffusion model architecture line by line. Finally, we completed the Networking 101 worksheet to brainstorm how to find qualified and helpful interviewees, and we created a calendar on Notion to plan and track our progress.

A visual example of how a diffusion ML model works from the “Diffusers” Colab (HuggingFace). We train the model by giving it an image and adding noise to the image over many intervals. That way, it can learn how images filled with nothing but noise can be reconstructed into an actual visually appealing picture. This picture shows the step-by-step denoising process, with the result highlighted on the right.

Goals for February

Now that we have a solid foundational understanding of the model itself, we’re going to shift our focus to researching the practical application of the Stable Diffusion model. After weighing the pros and cons of programming and hosting an ML model on the web versus on mobile, we’ve decided that, at least for the initial prototype, we will focus on programming for iOS only, through Swift and SwiftUI. Over the next two weeks, we want to continue reading research articles and programming blogs, this time focused on Apple’s implementation of Stable Diffusion on their mobile iOS devices.

Additionally, we want to start playing with Apple’s CoreML Application Programming Interface (API), which handles many of the intricate details of running a machine learning model on mobile, and try to implement it in Xcode. This way, we can see how the theory goes into practice and how it works with our own data, so we can understand how to correctly tune the parameters. We also want to finish creating our calendar on Notion so we can finalize the spec of the project, as well as create our GitHub code repository, which will allow us to build the codebase together and keep our work in sync. Our first programming goal is to create a basic initial user interface where we can import album and song metadata (album name, song titles, etc.) through the Spotify/Apple Music/SoundCloud APIs. To fulfill senior project obligations, we will also work to find a mentor by the end of the month.

Changes to Project

As of right now, we don’t plan to make any major changes to the project, as we think we are in a good place with respect to work and timeline. The only minor change that we have made after an initial round of research is to target iOS development instead of web development or an all-in-one package through software like Expo or React Native. This way, we can run the model natively on users’ devices instead of either making them download large files off the web to run it locally or incurring the cost of hosting the model on a complicated cloud computing service. Additionally, we have much more experience coding in Swift and Xcode than in other languages and programming software.

Timeline Updates

An upcoming issue could be the time factor. There is a lot of research and implementation we need to do, which will take a while, especially given the senior retreat at the end of this week and the February break thereafter. We also need to create a clean, accessible, and functional application interface after we finish fine-tuning the ML model, so time efficiency will be crucial.

Self-evaluation score: 25/25. Exemplary in all categories😎
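To make the "adding noise over many intervals" idea from this update more concrete, here is a small Swift sketch of the forward (noising) half of a diffusion process. It uses the closed-form step from DDPM-style diffusion models, which is our assumption about what the Colab illustrates rather than anything taken from our project. The function names, the linear schedule, and its default values are illustrative only, and the "image" is just a flat array of pixel values.

```swift
import Foundation

// Linearly spaced noise variances (betas), one per diffusion step.
// The default values are illustrative, not settings from our project.
func linearBetaSchedule(steps: Int, start: Double = 1e-4, end: Double = 0.02) -> [Double] {
    guard steps > 1 else { return [start] }
    return (0..<steps).map { start + (end - start) * Double($0) / Double(steps - 1) }
}

// Running product of (1 - beta): the share of the original image's
// variance that survives up to and including each step.
func cumulativeSignal(betas: [Double]) -> [Double] {
    var product = 1.0
    return betas.map { (beta: Double) -> Double in
        product *= 1.0 - beta
        return product
    }
}

// One standard-normal sample via the Box-Muller transform.
func gaussianSample<G: RandomNumberGenerator>(using rng: inout G) -> Double {
    let u1 = Double.random(in: Double.leastNonzeroMagnitude..<1, using: &rng)
    let u2 = Double.random(in: 0..<1, using: &rng)
    return (-2 * log(u1)).squareRoot() * cos(2 * Double.pi * u2)
}

// Jumps straight to step t of the noising process:
// x_t = sqrt(signal[t]) * x_0 + sqrt(1 - signal[t]) * noise
func addNoise<G: RandomNumberGenerator>(to pixels: [Double], step t: Int,
                                        signal: [Double], using rng: inout G) -> [Double] {
    let keep = signal[t].squareRoot()
    let noiseScale = (1 - signal[t]).squareRoot()
    var noisy: [Double] = []
    for value in pixels {
        noisy.append(keep * value + noiseScale * gaussianSample(using: &rng))
    }
    return noisy
}
```

Early steps keep almost all of the original image, while the last steps are almost pure noise. The model is trained to undo this process, which is what the step-by-step denoising picture shows.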

Calendar: www.notion.so/albumcovergenerator (this link will work for [email protected])

Danny Youstra &
